Computing History | Vibepedia

Overview

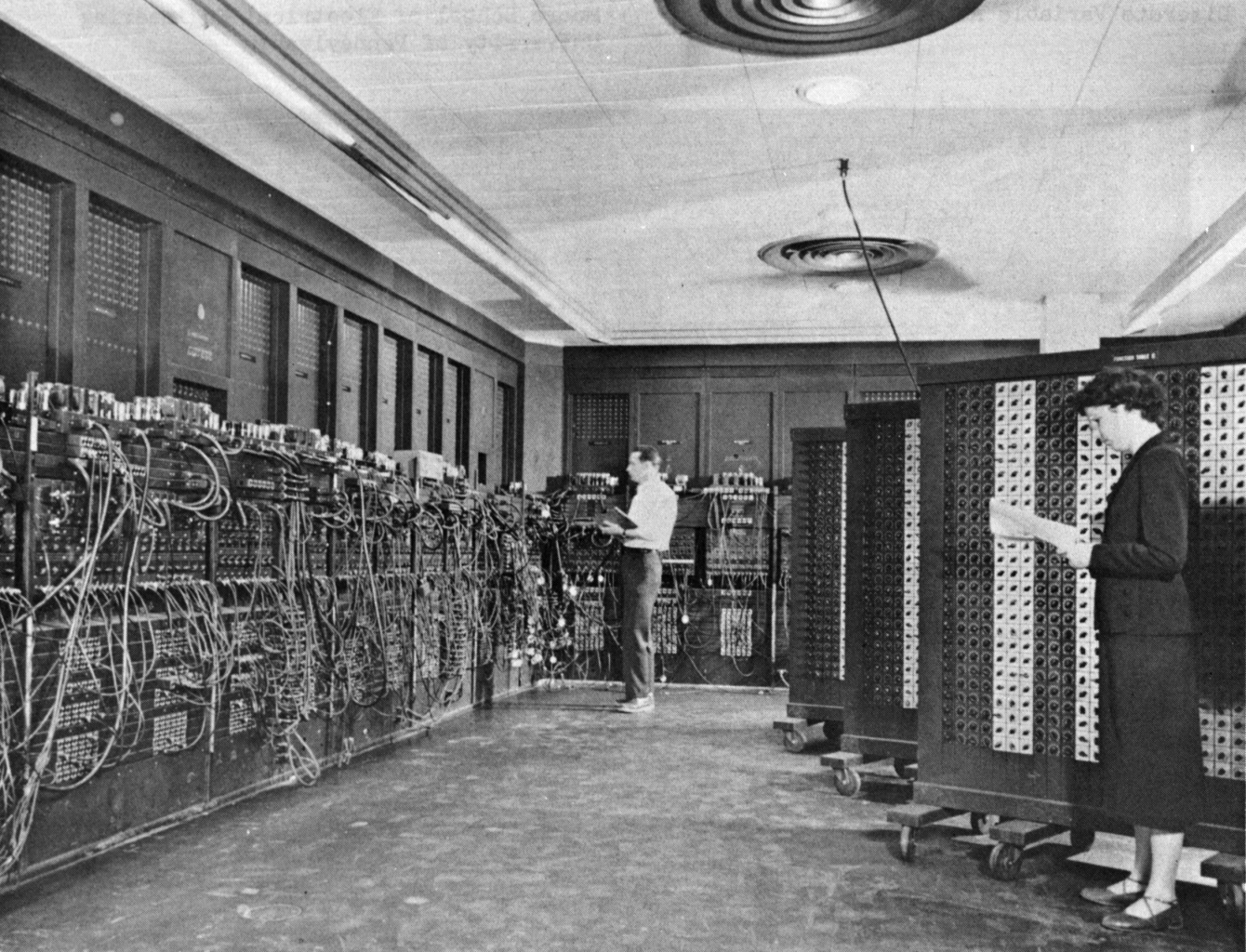

The history of computing traces humanity's long effort to automate calculation and information processing. It spans mechanical designs such as Charles Babbage's Analytical Engine and electromechanical machines such as Howard Aiken's Harvard Mark I, which computed with relays. The mid-20th century brought electronic computing with vacuum-tube machines like ENIAC and UNIVAC, at a time when punched cards were still a standard medium for data. Progress accelerated with the invention of the transistor and the later development of the integrated circuit, achieved independently by Jack Kilby and Robert Noyce, which paved the way for personal computers and the digital revolution. Today, computing history is an active field of study that keeps re-evaluating its past in light of artificial intelligence, quantum computing, and the pervasive influence of the internet.

🎵 Origins & History

Early civilizations developed simple tools like the abacus to aid arithmetic. The 17th century saw the invention of mechanical calculators by Blaise Pascal and Gottfried Wilhelm Leibniz. A pivotal, though never completed, precursor to modern computing was Charles Babbage's Analytical Engine, conceived in the 1830s. Its design laid out the fundamental principles of a programmable machine, including separate units for memory (the "store") and arithmetic (the "mill"), the latter a forerunner of the central processing unit. The late 19th and early 20th centuries witnessed the rise of electromechanical devices such as Herman Hollerith's tabulating machine, which processed punched cards for the 1890 US Census. Hollerith's company later became part of what is now IBM.

⚙️ How It Works

Computing history does not turn on a single mechanism. It is a progression of a few fundamental principles applied through evolving technologies. Early mechanical calculators, like Pascal's, used gears and wheels to perform arithmetic. Babbage's Analytical Engine was designed to be programmed with punched cards, an idea borrowed from the Jacquard loom, and punched cards remained a common input medium well into the 20th century. Electromechanical computing replaced gears with relays and switches, as in Howard Aiken's Harvard Mark I. The decisive shift came with electronic computing, which used vacuum tubes to calculate at unprecedented speeds, as exemplified by ENIAC. The invention of the transistor by John Bardeen, Walter Brattain, and William Shockley at Bell Labs in 1947 dramatically reduced size, power consumption, and heat, leading to second-generation computers. In the late 1950s, Jack Kilby at Texas Instruments and Robert Noyce at Fairchild Semiconductor independently developed the integrated circuit, which placed an entire circuit on a single chip and paved the way for the microprocessors that power modern devices.

📊 Key Facts & Numbers

The scale of change in computing history is best conveyed by numbers. ENIAC, the first electronic general-purpose computer, was completed in 1945, contained roughly 18,000 vacuum tubes, and weighed about 30 tons. A modern smartphone, costing around $1,000, is millions of times faster and has vastly more memory. The number of transistors on a single chip has grown from about 2,300 in Intel's first microprocessor, the 4004, to over 100 billion in the largest contemporary processors. The global semiconductor market, a direct descendant of this history, was valued at over $500 billion in 2022. The number of internet users worldwide surpassed 5 billion by early 2023, a measure of how thoroughly interconnected computing has reshaped global communication and commerce since the advent of the World Wide Web.

👥 Key People & Organizations

Computing history has been shaped by a mix of individual thinkers and ambitious organizations. Charles Babbage and Ada Lovelace conceptualized programmable computation in the 19th century. In the 1930s, Alan Turing developed theoretical foundations for computation. The post-war era brought figures like John von Neumann, whose name is attached to the stored-program architecture that remains foundational, and the team behind ENIAC at the University of Pennsylvania, led by J. Presper Eckert and John Mauchly. Major organizations such as IBM, Xerox PARC, Bell Labs, Intel, and Microsoft have been instrumental in developing hardware, software, and operating systems. Later, figures like Steve Jobs and Bill Gates popularized personal computing.

🌍 Cultural Impact & Influence

Computing has fundamentally altered how people communicate, work, learn, and entertain themselves. The personal computer democratized access to computing power, moving it from large institutions into homes and offices. The spread of the internet and the World Wide Web has created a globally interconnected society, fostering new forms of social interaction, commerce, and information dissemination. Digital art, music production, and video games, all direct descendants of computing advances, have become dominant cultural forces. Social media platforms like Facebook (whose parent company is now Meta) and Twitter (now X) have reshaped public discourse and political movements, demonstrating the societal shifts driven by computing technology.

⚡ Current State & Latest Developments

Computing today is characterized by rapid advances and the convergence of several technological frontiers. Chipmakers continue to pursue the transistor-density gains described by Moore's Law, though physical limits are slowing that pace, and they increasingly rely on innovations in chip architecture and on specialized processors such as GPUs and TPUs for machine-learning workloads. Artificial intelligence, particularly deep learning, is experiencing a renaissance that builds on decades of theoretical work and is fueled by massive datasets and computational power. Quantum computing, once a theoretical curiosity, now attracts significant investment and development from companies like IBM and Google, with the promise of transforming fields like cryptography and materials science. The ongoing digital transformation across industries, driven by cloud computing and the Internet of Things, means that computing history is still actively being written.

🤔 Controversies & Debates

Computing history is not without its controversies and debates, often centering on who deserves credit and the societal implications of technological progress. The 'inventor' of the computer is a hotly debated topic, with arguments for Charles Babbage, Alan Turing, John Atanasoff, and the ENIAC team, among others. The ethical implications of artificial intelligence, from job displacement to algorithmic bias, are a major point of contention, raising questions about the responsible development of these powerful technologies. Debates also rage over the environmental impact of massive data centers and the energy consumption of cryptocurrency mining, highlighting the unintended consequences of computational progress. Furthermore, the digital divide, the gap between those with and without access to computing resources, remains a persistent social and economic issue.

🔮 Future Outlook & Predictions

The outlook for computing is one of growing complexity and transformative potential. Many experts expect processing power to keep increasing, though more and more through novel architectures rather than conventional silicon scaling alone. Quantum computing may move from experimental labs toward practical applications within the next decade, potentially breaking current encryption standards and enabling new forms of scientific discovery. The integration of AI into many aspects of life, from personalized medicine to autonomous transportation, is likely to continue, raising profound questions about human agency and societal structure. Brain-computer interfaces and advanced robotics suggest a future in which the line between human and machine may blur further, testing the limits of what computing can achieve and what it means to be human.

💡 Practical Applications

The practical applications stemming from com

Key Facts

- Category: history

- Type: topic